Contributed By: Pablo Gutierrez Felix; LFX Fall 2025 Mentee

Last semester, I contributed to the Open Quantum Safe project through the LFX Mentorship Program as a mentee focused on enhancing constant-time analysis tooling in liboqs. In this blog post, I share the outcomes of, and key reflections on, the work I carried out over several months with Basil Hess, my mentor throughout this fantastic experience.

1 What is the Open Quantum Safe project and liboqs?

Open Quantum Safe (OQS) is a project under the Post-Quantum Cryptography Alliance (PQCA), a Linux Foundation initiative that supports the global transition to quantum-resistant cryptography. It comprises two main lines of work: liboqs, an open-source post-quantum cryptography library written in C, and the development of protocol and application prototypes that integrate post-quantum cryptography. Together, these give the research community access to implementations and tools as post-quantum cryptography moves toward standard use.

2 What was the objective of the mentorship?

The main goal of this mentorship was to evaluate and extend constant-time analysis tools for liboqs and then integrate them into continuous integration (CI). This lets the library check for constant-time issues automatically as new cryptographic schemes are added, so the liboqs team can raise concerns with developers when needed.

My mentor laid out a clear plan for the mentorship, which helped me stay organized and keep the work on track. We began by reviewing the literature and methodologies on timing side-channel attacks, which was very helpful because I had no prior knowledge of the topic. From there, we evaluated several candidate tools, identified those that best fit the project’s needs, implemented them in liboqs, analyzed the resulting warnings in existing implementations, and integrated the framework into the CI pipeline.

3 What are timing attacks?

Side-channel attacks (SCAs) bypass the security of a cryptographic algorithm by targeting its implementation rather than its underlying mathematics. They do so by collecting information leaked while the algorithm runs, such as its execution time, power consumption, electromagnetic emissions, or even sound. This post focuses on timing attacks, which can take various forms depending on what exactly is measured. Timing leaks in cryptographic implementations mainly originate from three sources:

- Secret-dependent control flow: Using secret values in conditional branches or as table-lookup indices can give attackers insight into the secret key through execution time (lookups leak through cache timing). Therefore, cryptographic code must run in the same amount of time regardless of the secret values it processes, a practice known as “constant-time coding”.

- Compiler optimization: Even if the source code contains no secret-dependent control flow, compiler optimizations may turn it into non-constant-time machine code, for example by reintroducing a branch. The risk grows with more aggressive optimization levels and more advanced compilers.

- Microarchitectural behavior: On certain CPU architectures, some instructions have variable latency, meaning they do not execute in constant time. Examples include bit shifts (Intel Pentium 4), integer multiplication (ARM Cortex-M3), and integer division (most CPUs).
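Integer division is behind the KyberSlash issue: Kyber's reference message decoding divided a value derived from a secret coefficient by q = 3329, and on many CPUs the latency of a division depends on its operands. The sketch below is modeled on that decoding step (it is not the liboqs or reference source, and the function names are ours) and assumes the coefficient is already reduced to [0, q). It contrasts the division with a multiply-and-shift replacement of the kind used to fix the issue.

```c
#include <stdint.h>

#define KYBER_Q 3329u /* ML-KEM / Kyber modulus */

/* Decode one message bit from a coefficient t in [0, q):
 * bit = round(2t / q) mod 2. The division's latency may depend on t,
 * which is secret during decapsulation. */
uint32_t msg_bit_div(uint32_t t)
{
    return (((t << 1) + KYBER_Q / 2) / KYBER_Q) & 1u;
}

/* Same bit without a division instruction. */
uint32_t msg_bit_ct(uint32_t t)
{
    t <<= 1;
    t += 1665;    /* 1664 + 1 compensates for the rounded-down multiplier */
    t *= 80635;   /* at most 8321 * 80635 < 2^32, so no overflow */
    t >>= 28;     /* (x * 80635) >> 28 approximates x / 3329 */
    return t & 1u;
}
```

Because 2^28 = 3329 · 80635 + 1541, the multiply-and-shift slightly underestimates division by 3329; adding 1665 instead of 1664 compensates, so both functions agree for every t in [0, q). The replacement removes the division but still assumes a constant-latency multiplier, which, as noted above, is not guaranteed on every CPU.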

Our goal was therefore to build a framework that spots where the post-quantum implementations in liboqs may reveal secret information through any of these timing-leak sources.

4 Framework Design

In the early stages of the mentorship, I studied the options in a list of constant-time verification tools, weighing each tool’s characteristics and how easily it could be integrated with liboqs. This narrowed the search to three tools that could fit the desired framework:

- memcheck (Valgrind): This tool detects memory leaks, use of uninitialized memory, and other common memory-management problems in C and C++ programs. Conveniently, checking cryptographic code for constant-time issues uses the same mechanism memcheck applies to uninitialized memory, as Adam Langley showed with ctgrind: memcheck reports uninitialized values that are used in conditional branches or to compute memory addresses (for example, table lookups), which are exactly the sensitive cases for constant-time behavior. Therefore, by marking secret data as uninitialized, Valgrind reports the code locations within a cryptographic implementation that may be exposed to timing side-channel attacks. More recently, researchers developed a Valgrind patch that also detects variable-latency instructions operating on secret data, the kind of issue found in implementations of the Kyber post-quantum algorithm family and known as KyberSlash.

- MemSan: Clang’s MemorySanitizer, a runtime detector of uninitialized-memory use that instruments code at the LLVM-IR level. Like Valgrind’s memcheck, it can also serve as a constant-time analysis tool in certain scenarios. It supports multiple platforms, which is key for our testing framework, since the patched Valgrind (Valgrind-Varlat) only covers a clearly defined set of platforms. Unlike Valgrind-Varlat, which operates directly on binaries, MemSan requires compiling the executable with specific Clang options (-fsanitize=memory) to perform the analysis.

- CT-Prover: A recent (2024) LLVM-IR-based constant-time analysis tool, available from the project’s GitHub repository. It relies on external tools to perform self-composition: the program is duplicated, and constant-time verification is reduced to a safety property of the composed program, namely that two runs sharing the same public inputs but with different secret values produce the same execution trace. This property is then checked using symbolic and formal representations.
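The ctgrind idea behind the first two tools can be sketched in C as follows. The harness marks secret key bytes as undefined (Valgrind’s VALGRIND_MAKE_MEM_UNDEFINED client request) or poisoned (MemorySanitizer’s __msan_poison), runs the code under test, and declassifies only the public result before using it. The CT_POISON/CT_UNPOISON macros, the USE_VALGRIND_CT switch, and the example functions are hypothetical names for illustration, not liboqs’s actual test harness; without either tool the macros compile to no-ops, so the file still runs normally.

```c
#include <stddef.h>
#include <stdint.h>

/* Pick an instrumentation backend (hypothetical macro names). */
#if defined(__has_feature)
#  if __has_feature(memory_sanitizer)            /* clang -fsanitize=memory */
#    include <sanitizer/msan_interface.h>
#    define CT_POISON(p, n)   __msan_poison((p), (n))
#    define CT_UNPOISON(p, n) __msan_unpoison((p), (n))
#  endif
#endif
#if !defined(CT_POISON) && defined(USE_VALGRIND_CT) /* run under valgrind */
#  include <valgrind/memcheck.h>
#  define CT_POISON(p, n)   VALGRIND_MAKE_MEM_UNDEFINED((p), (n))
#  define CT_UNPOISON(p, n) VALGRIND_MAKE_MEM_DEFINED((p), (n))
#endif
#ifndef CT_POISON                                /* plain build: no-ops */
#  define CT_POISON(p, n)   ((void)(p), (void)(n))
#  define CT_UNPOISON(p, n) ((void)(p), (void)(n))
#endif

/* Leaky: the early exit branches on secret bytes, so both tools report
 * a conditional jump that depends on "uninitialised" data. */
int leaky_is_zero(const uint8_t *sk, size_t n)
{
    for (size_t i = 0; i < n; i++)
        if (sk[i] != 0)
            return 0;
    return 1;
}

/* Constant-time: reads every byte and never branches on them. The result
 * is written through a pointer because recent Clang releases make MSan
 * report poisoned function arguments and return values by default. */
void ct_is_zero(const uint8_t *sk, size_t n, int *out)
{
    uint32_t acc = 0;
    for (size_t i = 0; i < n; i++)
        acc |= sk[i];
    *out = (int)(((acc - 1u) >> 8) & 1u); /* 1 iff every byte is 0 */
}

/* Harness: treat sk as secret, run the check, declassify the result. */
int check_secret_is_zero(uint8_t *sk, size_t n)
{
    int r;
    CT_POISON(sk, n);          /* mark the key bytes as secret */
    ct_is_zero(sk, n, &r);     /* expected: no report */
    CT_UNPOISON(&r, sizeof r); /* the result is public by design */
    CT_UNPOISON(sk, n);
    return r;
}
```

Built with -DUSE_VALGRIND_CT and run under valgrind, or built with clang -fsanitize=memory, this harness should stay silent. Calling leaky_is_zero on the poisoned buffer instead should produce Valgrind’s “Conditional jump or move depends on uninitialised value(s)” error or an MSan use-of-uninitialized-value report.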

After careful review and multiple implementation attempts, it became clear that CT-Prover has not been maintained recently and depends on specific older versions of external tools. It is also tightly coupled to hard-coded paths and submodules of those older versions, which makes it incompatible with current upstream releases and causes build failures. Making CT-Prover operational for constant-time analysis of liboqs would therefore have required extensive dependency work, debugging, and testing, significantly slowing down our timeline. As a result, Valgrind’s memcheck (from now on referred to as Valgrind-Varlat, since we applied the KyberSlash patch) and MemSan became the final tools selected for the constant-time analysis framework for liboqs.

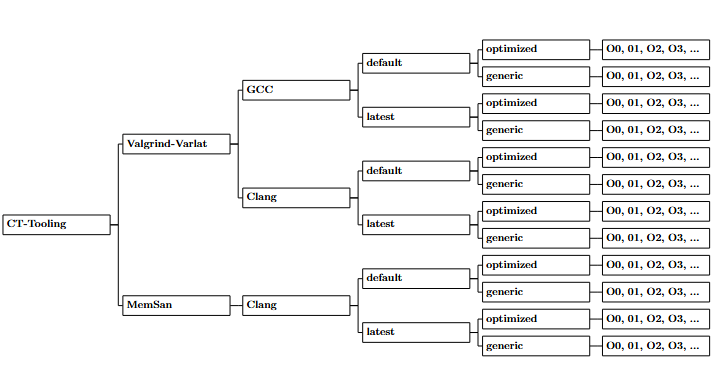

Once the tools were selected, we needed a robust testing setup that could provide meaningful insights across the wide range of compilation configurations typical in open-source projects. We therefore varied three main variables during testing: compiler versions, liboqs build types, and the optimization flags used during compilation. Valgrind-Varlat tests are executed with both GCC and Clang, the two most widely used C and C++ compilers, while MemSan runs on Clang only. Furthermore, we used two versions of each compiler, since different compiler versions may produce non-constant-time code, as demonstrated by the Clangover proof of concept (PoC). We selected the default compiler versions available (gcc-13 and clang-18) and the latest versions available at the time (gcc-14 and clang-20).

liboqs can be built with or without CPU-specific optimizations. This is controlled by the CMake option OQS_OPT_TARGET: setting -DOQS_OPT_TARGET=generic disables these optimizations, while -DOQS_OPT_TARGET=auto enables them.

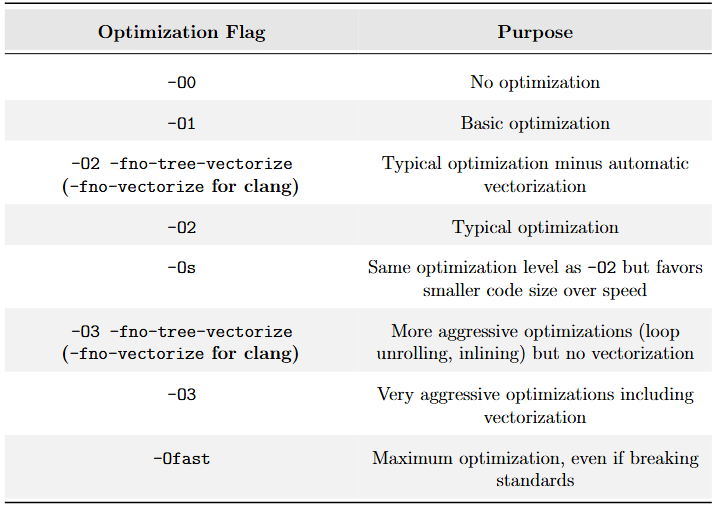

As mentioned earlier, the optimization level used during compilation can lead the compiler to break constant-time code. We therefore tested a wide range of optimization flags, shown in Table 1, from no compiler optimization to more aggressive techniques.

The resulting framework hierarchy has five levels: the first distinguishes between tools, the second between compilers, the third between compiler versions, the fourth between liboqs build options, and the fifth between the optimization flags used during compilation. Every optimization flag was tested for each generic/optimized build combination; some are omitted from Figure 1 for brevity.
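To make this hierarchy concrete, the sketch below walks the same levels and counts the resulting configurations. Tools, compilers, versions, and builds come from the text above; the flag list is passed in by the caller because Table 1 defines the real one, so any flags used with it here are placeholders. For brevity, compiler and version are folded into one string such as "clang-18"; the struct and function names are hypothetical.

```c
#include <stddef.h>
#include <stdio.h>

typedef struct {
    const char *tool;
    const char *compilers[4]; /* compiler + version, e.g. "gcc-13" */
    size_t n_compilers;
} tool_matrix;

static const tool_matrix TOOLS[] = {
    { "valgrind-varlat", { "gcc-13", "gcc-14", "clang-18", "clang-20" }, 4 },
    { "memsan",          { "clang-18", "clang-20" },                      2 },
};

/* Values passed as -DOQS_OPT_TARGET=... */
static const char *const BUILDS[] = { "generic", "auto" };

/* Visit every (tool, compiler, build, flag) combination, print it when
 * verbose is non-zero, and return the number of configurations. */
size_t walk_matrix(const char *const *flags, size_t n_flags, int verbose)
{
    size_t count = 0;
    for (size_t t = 0; t < sizeof TOOLS / sizeof TOOLS[0]; t++)
        for (size_t c = 0; c < TOOLS[t].n_compilers; c++)
            for (size_t b = 0; b < sizeof BUILDS / sizeof BUILDS[0]; b++)
                for (size_t f = 0; f < n_flags; f++) {
                    if (verbose)
                        printf("%s %s %s %s\n", TOOLS[t].tool,
                               TOOLS[t].compilers[c], BUILDS[b], flags[f]);
                    count++;
                }
    return count;
}
```

With n optimization flags, this yields (4 + 2) × 2 × n = 12n configurations, each run over every enabled algorithm, which is why the number of test runs grows quickly with each added flag.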

Table 1: List of optimization flags used in experiments.

Throughout the experiments, the framework was run on all enabled algorithms in liboqs. However, several variants of the hash-based signature schemes took excessively long to complete the constant-time tests, likely because of their inherent design, which slowed down experimentation. We therefore excluded the SPHINCS+ and SLH-DSA signature algorithms from these tests.

Figure 1: Testing setup for constant-time analysis of liboqs

5 Our Results

The framework gathered too much data to cover in detail in this blog post, so I will outline some of the key findings and insights from the experiments to give an overall picture of the results. Specifically, we will look at some of the Valgrind-Varlat and MemSan results for the default Clang version (clang-18).

First of all, the results can help users identify potential timing-leak scenarios, but we quickly realized that the final warning totals can include false positives. For instance, some warnings may be triggered by rejection-sampling subroutines (as in ML-KEM and ML-DSA), whose branches depend on data derived from secret inputs without necessarily leaking anything useful about the secret, and similar effects may repeat across an algorithm’s variants. Therefore, the absolute number of warnings is less informative than the relative differences observed across compilers, optimization flags, and the other configuration options in our testing setup.
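To illustrate how rejection sampling can produce such false positives, here is a sketch modeled on the uniform rejection sampler that ML-KEM uses to expand its public matrix from a seed. The function name and structure follow the ML-KEM reference code as an assumption; this is not the liboqs source. If a harness marks all key-generation randomness as secret, the seed derived from it is marked too, and both comparisons against q are reported, even though that seed and the resulting matrix are part of the public key. Declassifying the seed once it is derived (for example, with a VALGRIND_MAKE_MEM_DEFINED-style call) removes the report without hiding a real leak.

```c
#include <stddef.h>
#include <stdint.h>

#define MLKEM_Q 3329

/* Parse buf as 12-bit little-endian candidates and keep those below q.
 * Returns the number of coefficients written to r (at most len). */
size_t rej_uniform(int16_t *r, size_t len, const uint8_t *buf, size_t buflen)
{
    size_t ctr = 0, pos = 0;
    while (ctr < len && pos + 3 <= buflen) {
        uint16_t v0 = (uint16_t)((buf[pos] | ((uint16_t)buf[pos + 1] << 8)) & 0xFFF);
        uint16_t v1 = (uint16_t)(((buf[pos + 1] >> 4) | ((uint16_t)buf[pos + 2] << 4)) & 0xFFF);
        pos += 3;
        /* Data-dependent branches: reported whenever buf is marked secret.
         * Here buf is expanded from a public seed, so the report is a
         * false positive rather than a timing leak. */
        if (v0 < MLKEM_Q)
            r[ctr++] = (int16_t)v0;
        if (ctr < len && v1 < MLKEM_Q)
            r[ctr++] = (int16_t)v1;
    }
    return ctr;
}
```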

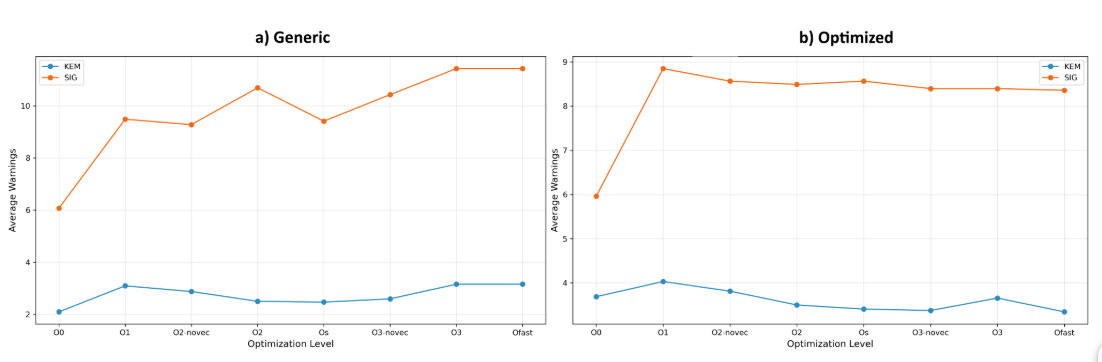

Since liboqs includes more signature algorithms than KEMs, we analyzed both the total number of warnings per algorithm family (KEMs and signatures) and the average number of warnings per variant within each family. This helps identify which algorithm type generates more constant-time warnings, and therefore requires greater attention from maintainers, while also showing whether one family tends to produce more warnings than the other. Overall, signatures produce more warnings than KEMs, both in total and on average. This may be due to how signature schemes work internally: they often invoke the same routines flagged as “non-constant-time” multiple times within a single signing or verification run, whereas KEM implementations typically make fewer repeated calls to such routines.

Interestingly, across compiler versions we observed only minor differences in the number of warnings, suggesting that the overall findings are stable, though not perfectly invariant to compiler updates. In future work, we plan to run the tests on older compiler versions to see whether this changes.

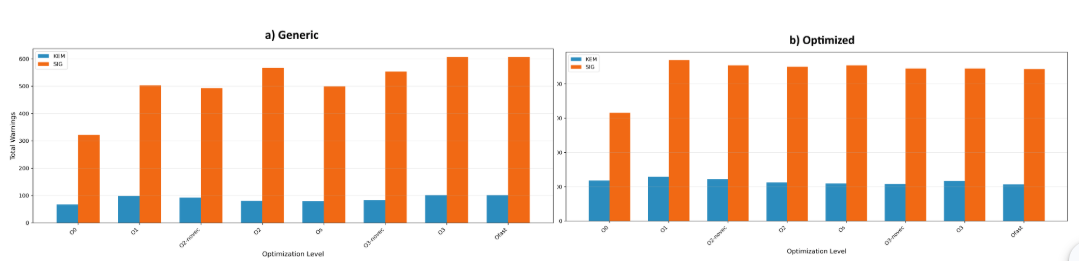

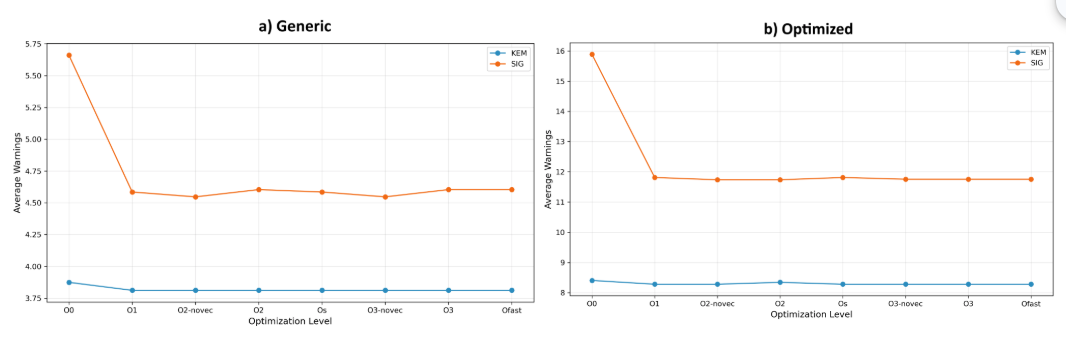

For Valgrind-Varlat with the default Clang version, the generic build shows a steady increase in warnings as the optimization flag becomes more aggressive (see Figures 2 and 3). The effect is most pronounced for signatures and more moderate for KEMs, which supports the hypothesis that compilers tend to optimize away constant-time code under aggressive optimization flags. In contrast, the optimized build already shows a significantly higher warning count at -O0 and -O1, and this level is maintained across the remaining flags. A plausible explanation is that optimized implementations rely on assembly code that the compiler does not optimize, which keeps the warning count steady across the optimization flags tested.

Figure 2: Valgrind-Varlat average KEM and SIG warnings per optimization level compiled with clang-18.

Figure 3: Valgrind-Varlat total KEM and SIG warnings per optimization level compiled with clang-18.

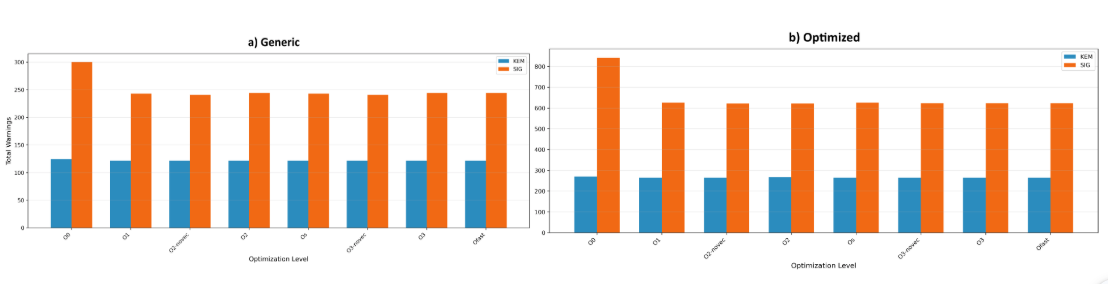

MemSan’s results for the same testing parameters are quite different. They show a steady warning count across optimization flags for both KEMs and signatures, under both generic and optimized builds. The exception is -O0, which produces significantly more warnings than the other flags. Clang’s documentation recommends -O1 or higher “for reasonable performance”, which may be related to this behavior. A subsequent review of the warnings will determine which of them are actual vulnerabilities. Furthermore, Figures 4 and 5 show that MemSan reports significantly more warnings under the optimized build than under the generic build for both KEMs and signatures, particularly for the latter.

Figure 4: MemSan average KEM and SIG warnings per optimization level compiled with clang-18.

Figure 5: MemSan total KEM and SIG warnings per optimization level compiled with clang-18.

All warnings are systematically stored in log files, which maintainers can access to triage, analyze, and confirm whether they are actual timing leaks. In fact, this is how we located a timing leak in the int16_nonzero_mask function of NTRU Prime (sntrup in liboqs), the algorithm used for post-quantum SSH. It was found with Valgrind-Varlat in the clang-20 generic build compiled with -O3, and then reproduced with clang-13 and clang-19. The issue appears to have been addressed in SUPERCOP and OpenSSH, but liboqs was using an outdated implementation, which is now being updated. This case shows that the framework can help find real constant-time vulnerabilities in the post-quantum algorithms within liboqs.
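For illustration, the first function below has the shape of NTRU Prime’s int16_nonzero_mask helper: it returns 0 for zero and -1 otherwise, using only arithmetic. One plausible mechanism, consistent with the leak appearing only in certain compiler and flag combinations, is that an optimizing compiler recognizes this as “x != 0 ? -1 : 0” and emits a branch. A common mitigation, sketched in the second function as an assumption rather than the fix adopted upstream, is an empty inline-asm “value barrier” (a GCC/Clang extension) that hides the intermediate value from the optimizer.

```c
#include <stdint.h>

/* Arithmetic-only mask: 0 if x == 0, -1 otherwise. Correct C, but the
 * compiler is free to turn it back into a compare-and-branch. */
int int16_nonzero_mask(int16_t x)
{
    uint16_t u = (uint16_t)x; /* 0, else 1..65535 */
    uint32_t v = u;
    v = -v;                   /* 0, else 2^32-65535..2^32-1 */
    v >>= 31;                 /* 0, else 1 */
    return -(int)v;           /* 0, else -1 */
}

/* Same computation behind an optimization barrier: the empty asm claims
 * it may modify v, so the compiler can no longer assume v < 65536 and
 * cannot rewrite the sequence as a comparison with zero. */
int int16_nonzero_mask_barrier(int16_t x)
{
    uint32_t v = (uint16_t)x;
#if defined(__GNUC__) || defined(__clang__)
    __asm__("" : "+r"(v));
#endif
    v = -v;
    v >>= 31;
    return -(int)v;
}
```

A barrier only constrains the optimizer at one point, so whether it suffices still depends on the compiler and flags; re-checking the compiled binary across configurations is exactly what the framework is for.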

We are currently preparing a PR that integrates this framework into liboqs for CI and local testing. First, however, we are carefully analyzing the warnings it reports so that the tooling can ignore those we have confirmed to be false positives. Once an algorithm’s warnings have all been reviewed and its false positives identified, constant-time analysis will be enabled for it, one algorithm at a time.

6 Conclusion

The framework developed throughout the course of this mentorship proved effective in uncovering constant-time irregularities and providing meaningful comparative data across algorithm families, compilers, and build configurations. Although many detected warnings may correspond to benign patterns or false positives, the relative analyses revealed clear tendencies, particularly the higher incidence of constant-time warnings in signature schemes. These findings validate the usefulness of the framework as a diagnostic and prioritization tool for maintainers, guiding future constant-time verification efforts within liboqs.

Beyond the technical outcomes, this mentorship program was an outstanding experience that I recommend to any open-source enthusiast. It introduced me to a field within the cryptographic community that I had yet to explore, sparking a lasting personal and academic interest that I plan to keep developing in the years ahead. Above all, the chance to contribute to one of the most impactful open-source projects in post-quantum cryptography is something I will never forget.

I want to thank my mentor Basil Hess for his guidance, support, and thorough reviews, all of which were instrumental to the project’s success. I also want to thank Douglas Stebila for kindly introducing me to the project and for his invaluable insights as the mentorship concluded.